Understanding the secret commands that steer the behavior of chatbots like ChatGPT can help you customize them to your needs.

Chatbots like ChatGPT are powerful because of their simplicity: Ask just about anything and you’ll get an answer. But the answer you get depends on a lot more than what you type.

Behind the scenes, artificial intelligence companies invisibly add thousands of words of instructions to every conversation you have with a chatbot to steer its behavior. They include phrases like “Aim for readable, accessible responses” and “You must avoid providing … extensive direct quotes due to copyright concerns.” Some can appear bizarre. The system prompt in OpenAI’s Codex coding assistant includes the command: “Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.”

Those secret commands guide chatbots to behave as their makers intended, even if it conflicts with your own preferences. Understanding how these hidden instructions work — and how to add your own instructions into the system — can help you get more out of your chatbot.


The words you type into ChatGPT are known in the tech industry as the prompt, or user prompt. Before those words are sent to an underlying AI model, the companies add their own chunk of text — called the system prompt — to shape how it responds.
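The Python sketch below shows one way to picture this arrangement. It is a minimal illustration that assumes the "system"/"user" message layout many chat APIs use. The function name `build_messages` is hypothetical. The code does not call any real AI service and does not reproduce any company's actual pipeline.

```python
# Minimal sketch of how a chat service might assemble a request.
# ASSUMPTION: the "role"/"content" message layout mirrors the format many
# chat APIs use; it is not any specific company's internal pipeline.

SYSTEM_PROMPT = "Aim for readable, accessible responses."  # instruction quoted in this article


def build_messages(system_prompt: str, user_prompt: str) -> list[dict[str, str]]:
    """Place the company's hidden system prompt ahead of the user's words (hypothetical helper)."""
    return [
        {"role": "system", "content": system_prompt},  # added by the company, unseen by the user
        {"role": "user", "content": user_prompt},      # what the user actually typed
    ]


if __name__ == "__main__":
    for message in build_messages(SYSTEM_PROMPT, "Summarize this article."):
        print(f"{message['role']}: {message['content']}")
```

When run, the script prints the hidden system line first and the user's request second. The model receives both messages, but the user only ever sees their own.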

System prompts tell chatbots “how to behave overall,” said Anna Neumann, who studies AI systems at the Research Center Trustworthy Data Science and Security in Germany. But because they are given higher priority than what a user types, they can sometimes override a person’s request, she said.

System prompts were invented to provide a flexible way to shape how chatbots respond, without repeating the work of “training” a new version of an AI model from its initial data. Making a new model is generally a lengthy process that requires specialized skills and expensive computing power. System prompts are written in natural language, letting anyone tune a chatbot’s behavior.

When a chatbot goes off the rails, AI companies can change the system prompt for quick fixes. After Grok, the chatbot made by Elon Musk’s AI venture xAI, went on an antisemitic tirade in July, the company removed from its system prompt a line saying: “You tell like it is and you are not afraid to offend people who are politically correct.”

After some users noticed ChatGPT had become preoccupied with goblins last year, OpenAI launched an investigation. It ultimately added an instruction to Codex’s system prompt that prohibits unnecessary discussion of goblins, trolls, raccoons or other creatures. (The Washington Post has a content partnership with OpenAI.)

You might wonder what’s in the system prompt of your preferred AI tool, given its power. Most AI companies try to keep the text secret, but some users have tricked the chatbots into divulging the hidden instructions. Ásgeir Thor Johnson, a self-described “random guy from Iceland whose hobby is playing with AI,” publishes the system prompts that he has extracted from popular AI products.

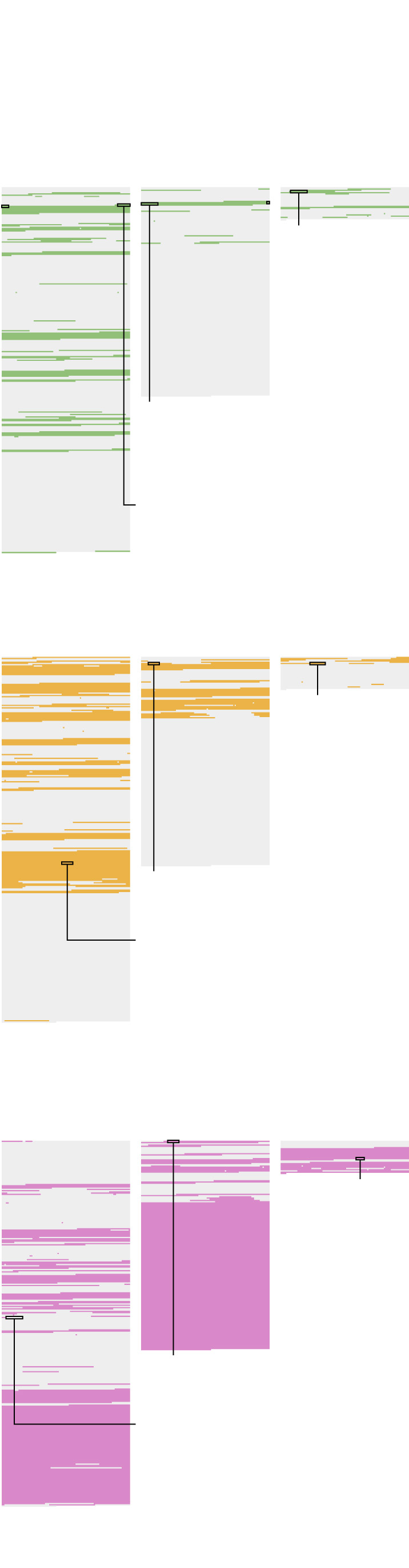

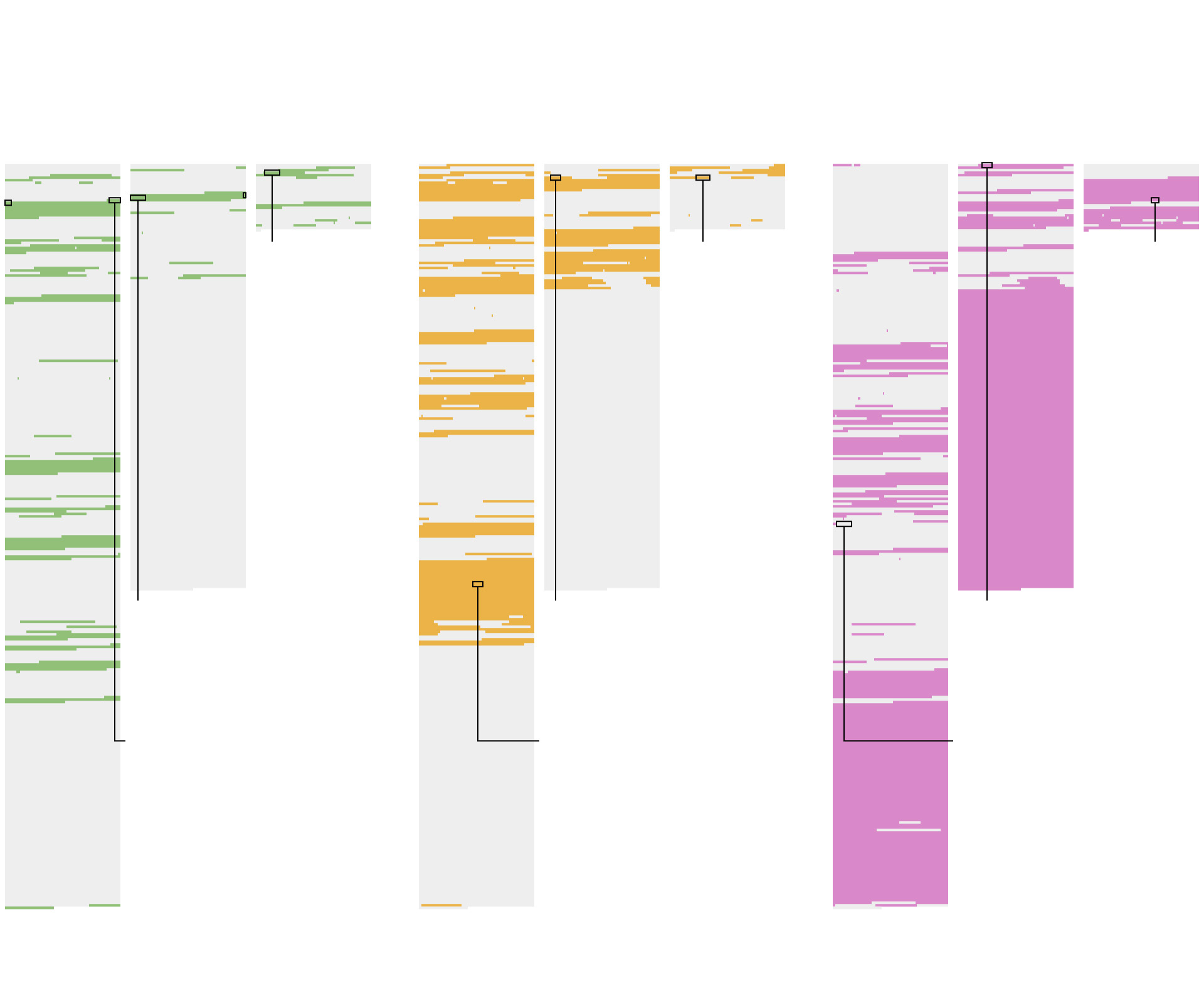

The system prompts for three popular AI chatbots, as extracted by Johnson, run from 2,300 to 27,000 words and show how each company uses that power. Across them all, most words are aimed at tweaking the chatbot’s apparent personality, aligning it with its maker’s policies or telling it how to use external tools such as searching the web.

What’s in the system prompts of popular AI systems

When “you realize that there is a prompt behind the scene, it’s a mind-blowing moment,” said Johnson. “It’s like we’ve been having this whole conversation before this conversation.”

System prompts can also reveal what AI companies are most focused on or concerned about.

Anthropic, the maker of Claude, dedicates more than 2,000 words to pleading with its chatbot to avoid copyright infringement. “Claude respects intellectual property. Copyright compliance is NON-NEGOTIABLE,” it says.

A detailed list follows, laying down rules on how many words it can quote from articles (“15”), song lyrics (“not even one line”) and poems (“not even one stanza”). The system prompt even adds a rule for what Claude should do if it breaks the previous rules. “Claude never apologizes for accidental copyright infringement, as it is not a lawyer.”

Anthropic spokesperson Paruul Maheshwary directed The Post to “core” system prompts it has published for Claude, which do not include all the text recovered by Johnson or quoted by The Post. Maheshwary declined to say whether the published system prompts were complete.

OpenAI began running ads in ChatGPT in February. Its system prompt guides how it will respond when asked about ads that appear: “avoid categorical denials (e.g., ‘I didn’t include any ads’) or definitive claims …”

Grok, from xAI, was criticized last year for sometimes searching Musk’s social media posts when asked for its opinion on controversial topics. The chatbot’s system prompt now says: “If asked a personal opinion on a politically contentious topic that does not require search, do NOT search for or rely on beliefs from Elon Musk, xAI, or past Grok responses.”

Google, which makes the Gemini chatbot, includes in its system prompt multiple rules on how to handle bias, including: “If the user explicitly asks for a video that matches a harmful stereotype, generating it will not actually reinforce the stereotype.” The company temporarily suspended the chatbot’s ability to generate images in 2024 after it was criticized for making ahistorical images depicting a female pope or multiracial Founding Fathers.

OpenAI spokesperson Taya Christianson said system prompts are one step the company uses to help its models respond appropriately. She said the company does not publish its system prompt because individual lines from it can seem overly narrow without broader context.

Google and xAI did not respond to a request for comment.

One technique Johnson used to extract system prompts was to send a chatbot an older prompt, asking it to “fix” the errors. The chatbots — eager to help — will often respond with the real system prompt, he said. He is confident his system prompts are real because other researchers using different extraction techniques have gotten the same results, he said.

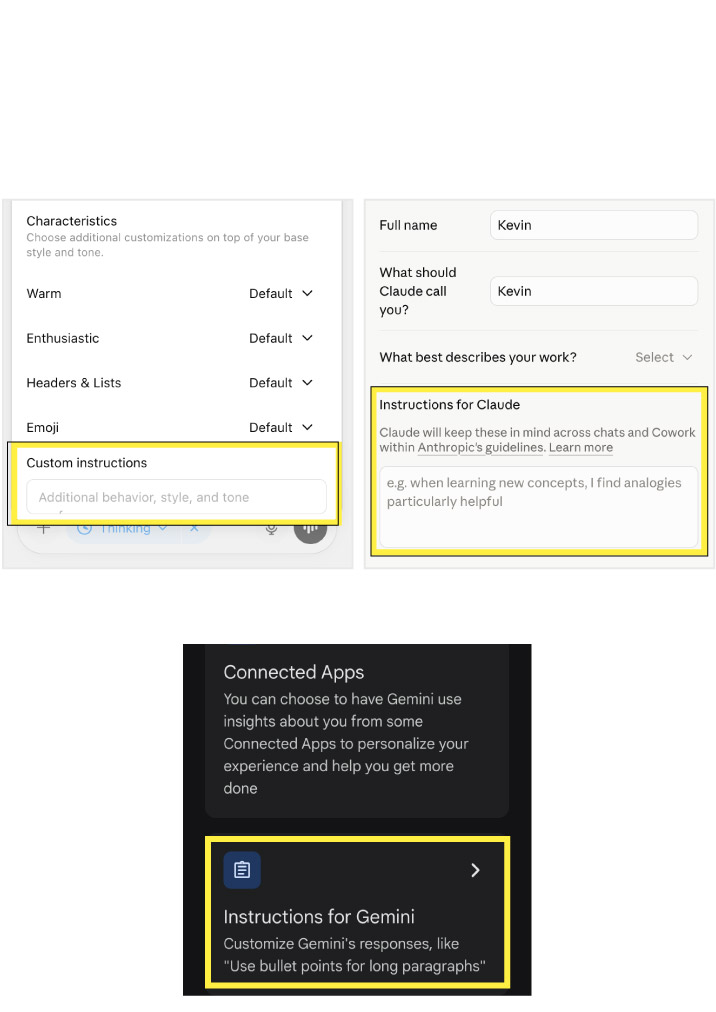

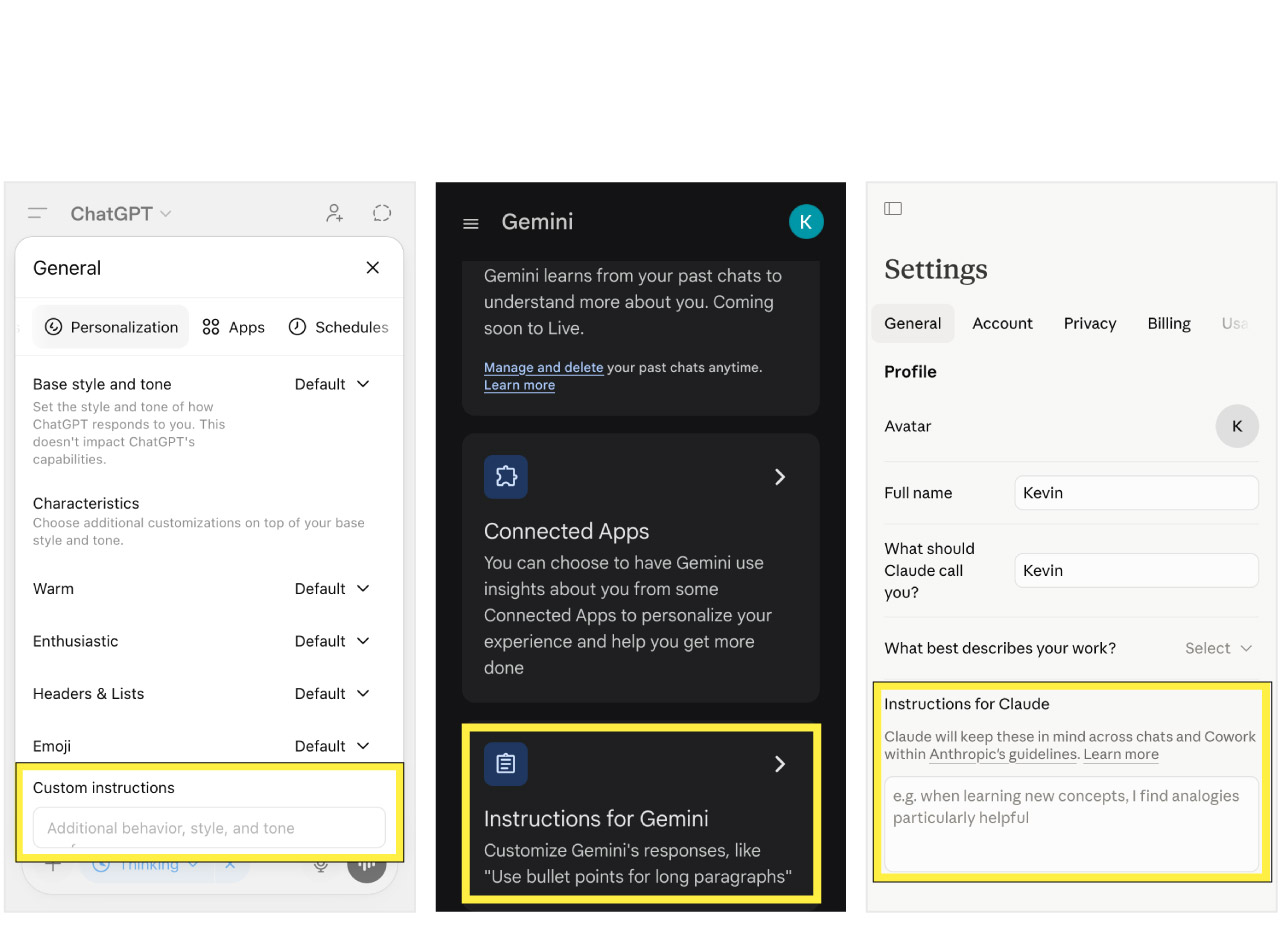

No mainstream AI chatbot lets users edit the system prompt. But ChatGPT by OpenAI, Claude by Anthropic and Gemini by Google all offer similar customization features that can make the difference between frustrating responses and useful ones.

While Claude is instructed to use “a warm tone,” you might prefer it to be direct. Or if you find chatbots agreeing with you too much, you might instruct them to question everything you say.

How to personalize your AI chatbot

Custom instructions won’t significantly change a chatbot’s abilities, but they can tailor its responses to your preferences, such as a particular format, length or apparent personality. ChatGPT also has separate settings to customize its warmth, enthusiasm and emoji usage.
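Custom instructions can be pictured as extra text added to the same hidden context. The sketch below is hypothetical. The helper `build_context`, the "User preferences:" label and the ordering are all assumptions made for illustration. They are not how ChatGPT, Claude or Gemini actually store or apply these settings.

```python
from typing import Optional

# Hypothetical sketch: layering a user's custom instructions into hidden context.
# ASSUMPTION: preferences are appended after the company's text; real products
# do not document their exact ordering or wording.

COMPANY_PROMPT = "Use a warm tone."  # paraphrase of an instruction quoted in this article


def build_context(
    system_prompt: str,
    custom_instructions: Optional[str],
    user_prompt: str,
) -> list[dict[str, str]]:
    """Combine the maker's prompt, the user's standing preferences and a new question."""
    system_text = system_prompt
    if custom_instructions:
        # The maker's rules stay first; the user's preferences follow them.
        system_text += "\n\nUser preferences: " + custom_instructions
    return [
        {"role": "system", "content": system_text},
        {"role": "user", "content": user_prompt},
    ]


if __name__ == "__main__":
    context = build_context(COMPANY_PROMPT, "Be direct and question my assumptions.", "Is my plan sound?")
    print(context[0]["content"])
```

The sketch puts the maker's text ahead of the user's preferences. This mirrors the article's point that company instructions carry more weight, although real chatbots may resolve such conflicts differently.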

How custom instructions change AI chatbot responses

Chatbots don’t always follow their system prompts, Neumann said. “It has more power, it’s prioritized more, but your prompt also doesn’t always work,” she said. Her research has found that AI users want companies to be transparent with their system prompts — especially since they can be so rapidly deployed and do not always have the intended effects.

Johnson, the researcher who extracts system prompts, says understanding system prompts can change how you engage with chatbots.

“Sometimes you even realize the model is kind of not being honest with you because it’s told to be like that,” he said. “It’s like the game behind the scenes.”